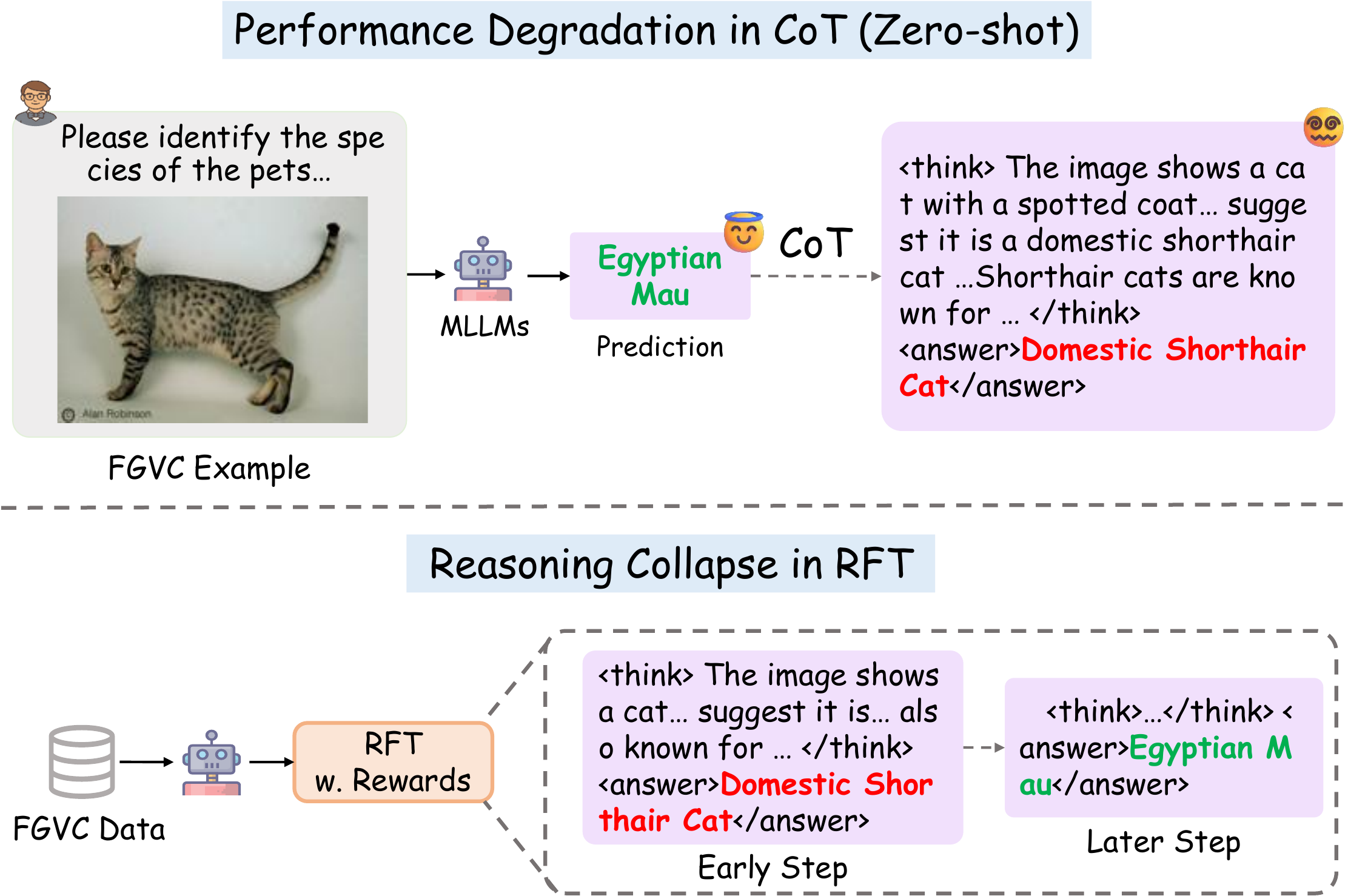

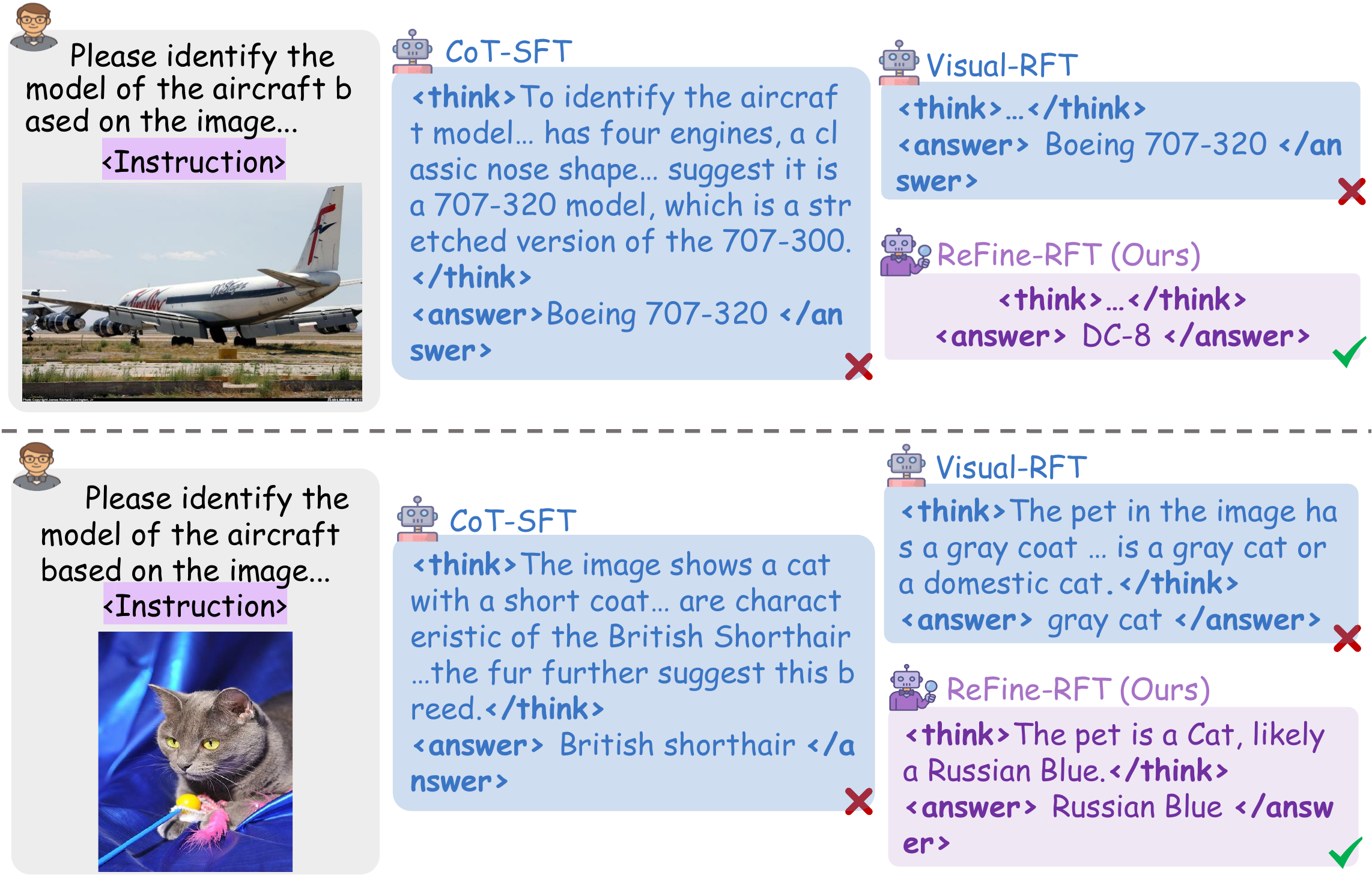

Multi-modal large language models (MLLMs) still struggle on Fine-Grained Visual Classification (FGVC), where subtle visual discrimination is essential. Chain-of-Thought (CoT) reasoning is widely adopted to boost performance on challenging tasks, yet prior work has shown that it can harm visual perception accuracy.

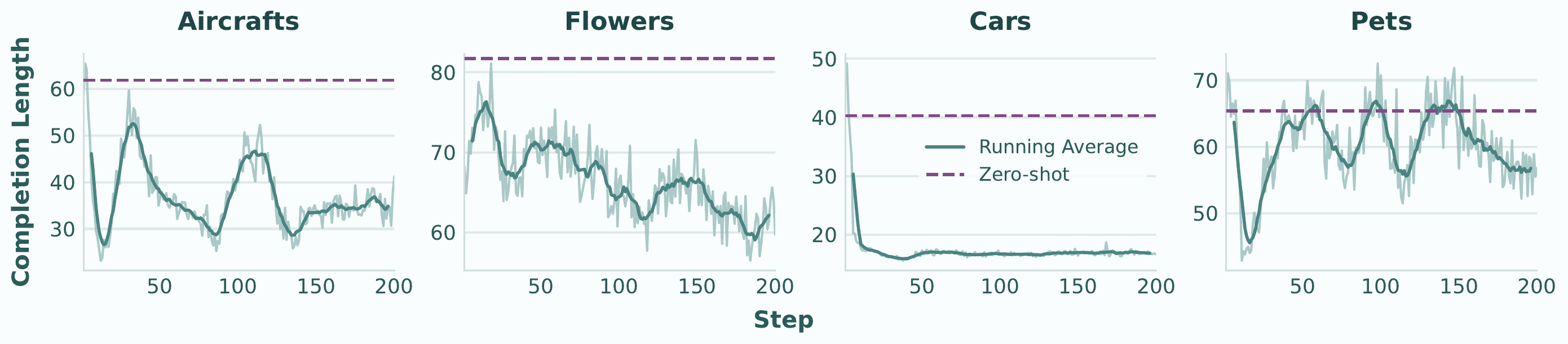

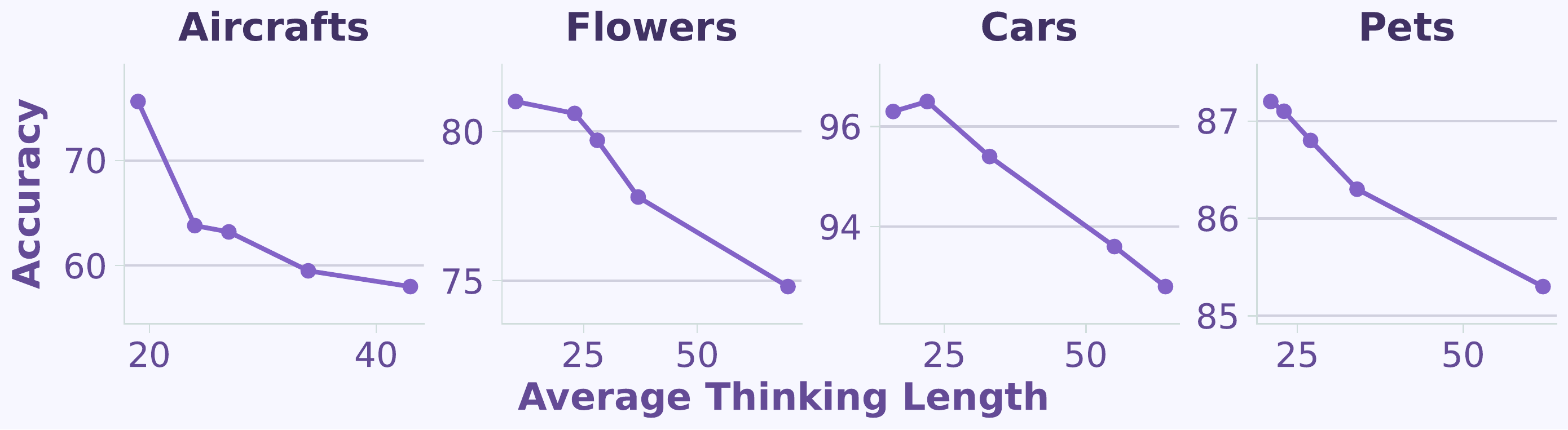

We systematically re-examine the role of textual reasoning in FGVC across zero-shot and reinforcement fine-tuning (RFT) settings, uncovering a central paradox: reasoning length is the key factor, and longer CoT consistently lowers classification accuracy, a phenomenon we term the "Cost of Thinking".

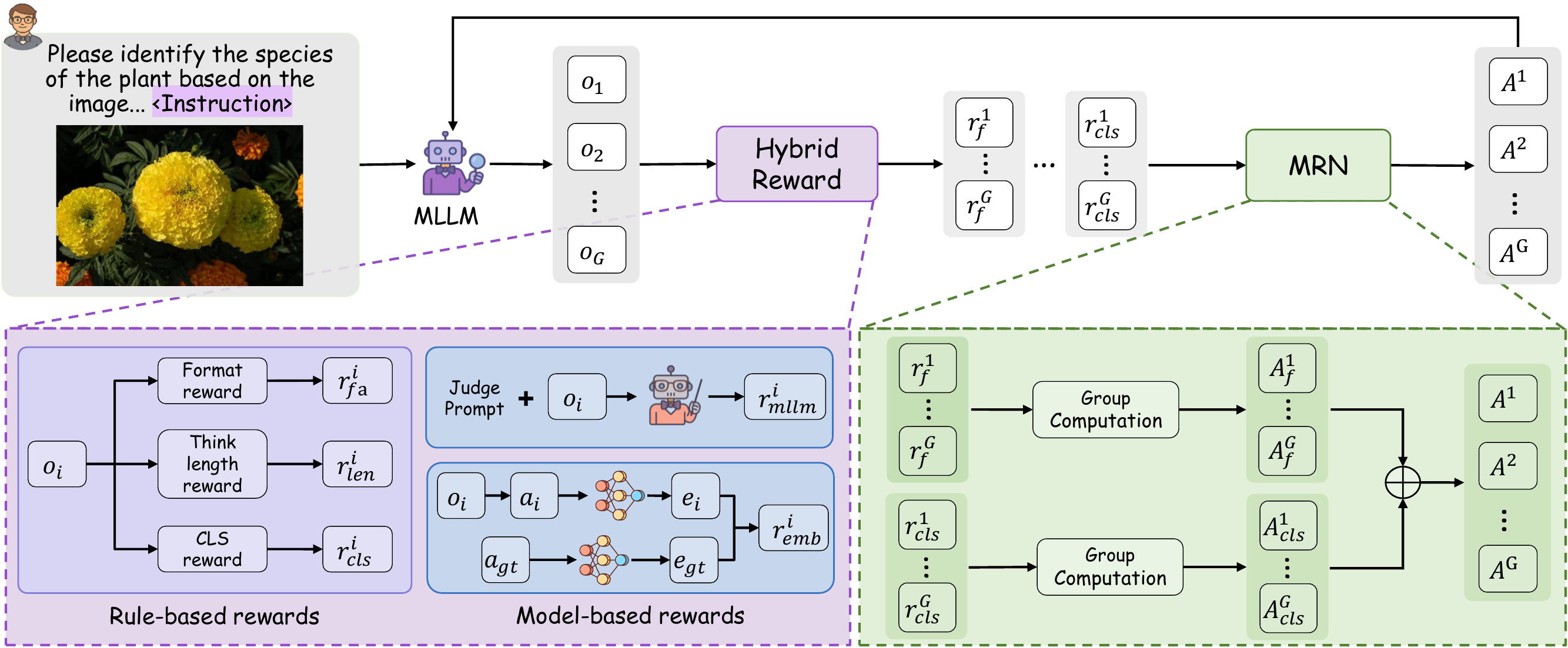

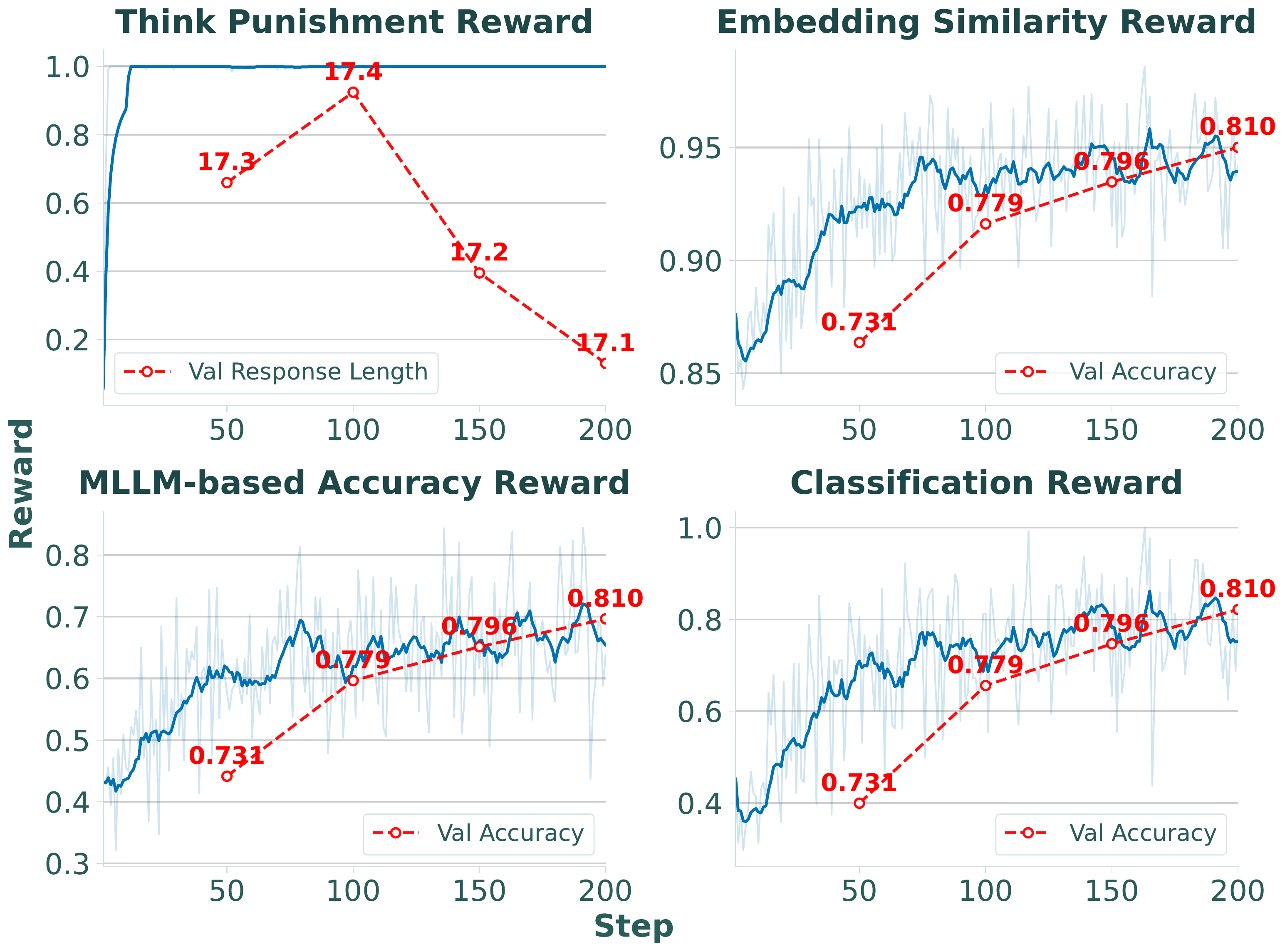

Building on this, we propose ReFine-RFT, which combines an ensemble reward with Multi-Reward Normalization (MRN) to constrain reasoning length while providing dense, accuracy-centric feedback. In extensive experiments, ReFine-RFT achieves state-of-the-art results across FGVC benchmarks.

1

"Cost of Thinking"

Verbose CoT systematically degrades MLLM performance on fine-grained perception: thinking length, not CoT quality, is the key factor.

2

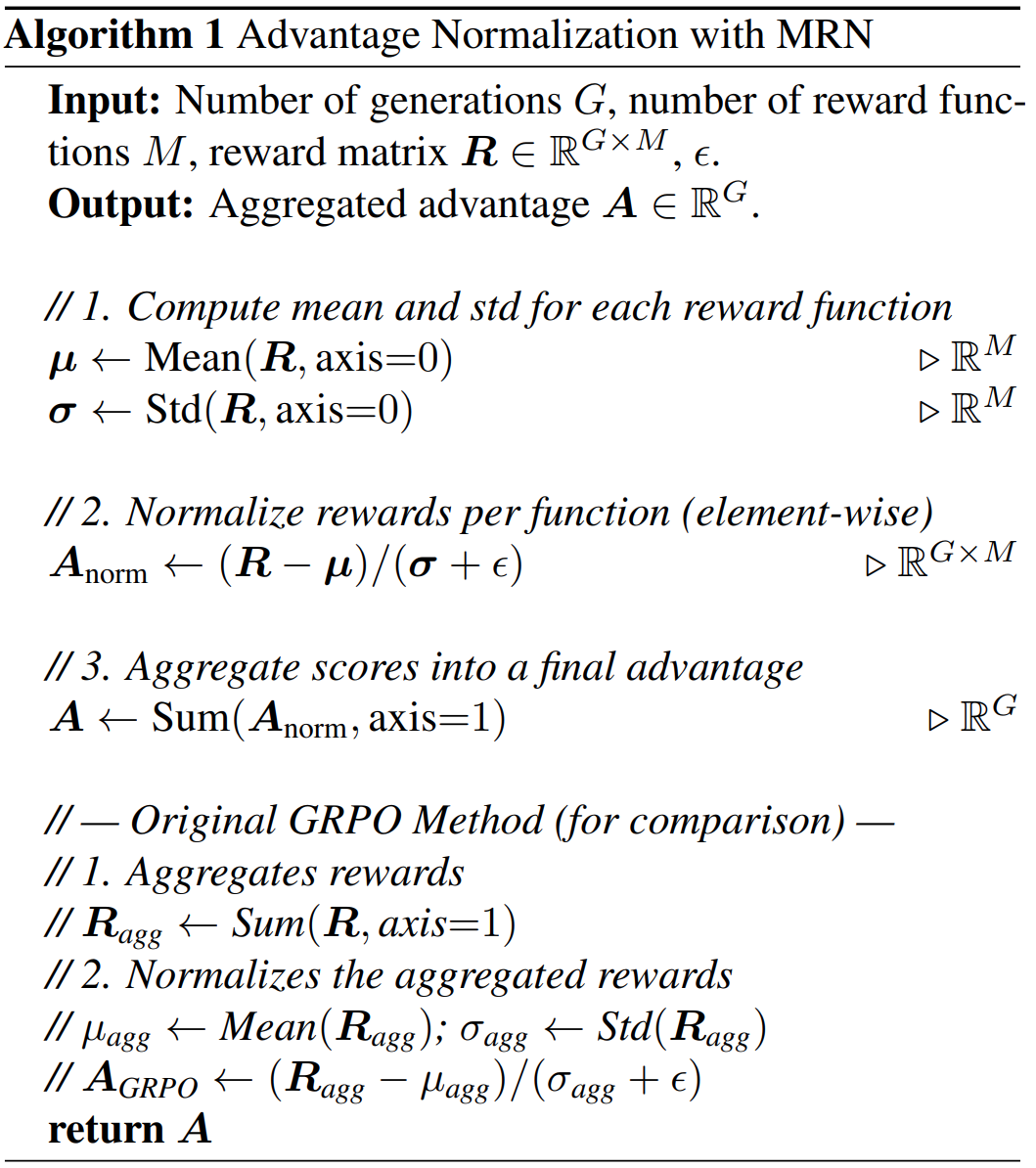

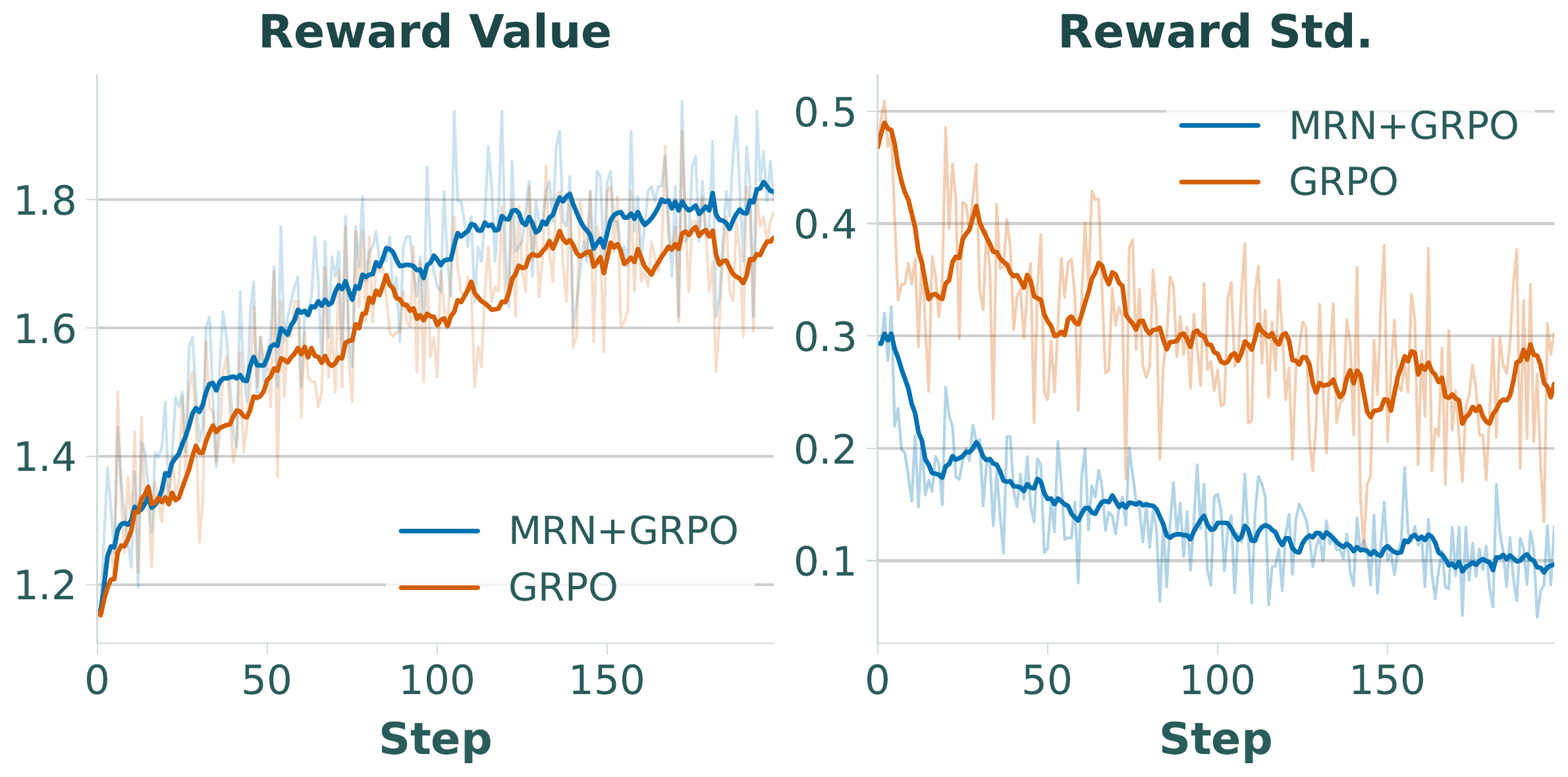

Multi-Reward Normalization (MRN)

A plug-and-play normalization module that independently normalizes heterogeneous reward signals before aggregation, stabilizing multi-objective RFT training.

3

ReFine-RFT Framework

Integrates MRN with an ensemble reward (format, accuracy, thinking length, MLLM-based, embedding similarity) to optimize visual accuracy while controlling reasoning length.

4

State-of-the-Art on FGVC

ReFine-RFT achieves 86.5% average accuracy (+3.6% over Visual-RFT) across four FGVC benchmarks in the 4-shot setting, using only a 2B-parameter backbone.
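To make the idea behind Multi-Reward Normalization (contribution 2) more concrete, here is a minimal sketch of normalizing each reward signal independently before summing them. It is not the authors' implementation. The z-score normalization over a group of sampled responses, the optional weights, and the names `normalize`, `mrn_aggregate`, `accuracy`, and `thinking_length` are all assumptions made for illustration; the page only states that heterogeneous rewards are normalized independently before aggregation.

```python
from statistics import mean, pstdev


def normalize(values, eps=1e-6):
    """Z-score one reward signal across a group of sampled responses.

    Assumption: z-scoring is used here only as one plausible normalizer.
    """
    mu = mean(values)
    sigma = pstdev(values)
    # eps keeps the division finite when every response gets the same score.
    return [(v - mu) / (sigma + eps) for v in values]


def mrn_aggregate(rewards, weights=None):
    """Normalize each reward independently, then sum per response.

    rewards: dict mapping a reward name to a list of per-response scores.
    weights: optional dict of per-reward weights (hypothetical; defaults to 1.0).
    """
    sizes = {len(scores) for scores in rewards.values()}
    if len(sizes) != 1:
        raise ValueError("every reward needs one score per response")
    n = sizes.pop()
    weights = weights or {name: 1.0 for name in rewards}

    total = [0.0] * n
    for name, scores in rewards.items():
        for i, z in enumerate(normalize(scores)):
            total[i] += weights[name] * z
    return total


# Four sampled responses with two rewards on very different scales.
group_rewards = {
    "accuracy": [1.0, 0.0, 1.0, 0.0],                  # correct / incorrect
    "thinking_length": [-120.0, -400.0, -80.0, -300.0],  # negative token count
}
combined = mrn_aggregate(group_rewards)
best = combined.index(max(combined))  # index 2: correct and shortest reasoning
```

Summing the raw values would let the length term (hundreds of tokens) swamp the 0/1 accuracy term. After per-reward normalization both signals contribute on a comparable scale, which is the stabilizing effect the MRN description points to.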